ARC-AGI-3: The $10,000 Challenge

By Michal Mikulasi

Aug 18, 2025

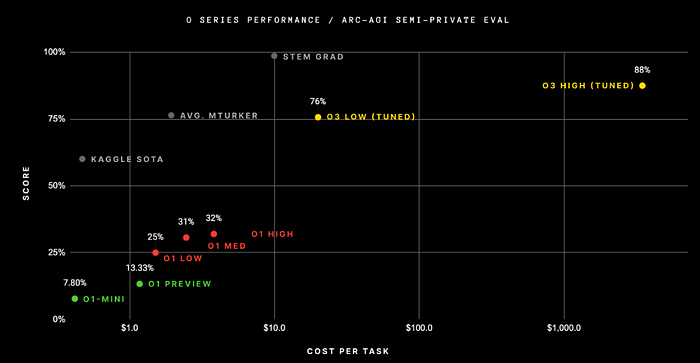

Artificial intelligence is advancing fast, but a new benchmark called ARC-AGI-3 reminds us just how far today’s systems are from true general intelligence. Designed by François Chollet, creator of the original ARC test, this benchmark doesn’t just measure knowledge or pattern recognition; it measures the ability to learn from scratch.

What Makes ARC-AGI-3 Different?

Unlike traditional benchmarks that test models on static data, ARC-AGI-3 introduces interactive grid-world mini-games. Here, an AI agent must figure out the rules of the game by exploring, experimenting, and planning, just as a child would when encountering something new.
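
To make that explore, experiment, and plan loop concrete, here is a minimal Python sketch. It is not ARC-AGI-3's API or one of its games: `ToyGridGame`, its four opaque action ids, and the hidden action-to-move rule are all invented for illustration. The toy also tells the agent where the goal is, which the real benchmark presumably would not.

```python
import random
from collections import deque


class ToyGridGame:
    """Hypothetical 5x5 grid game; the meaning of each action is hidden."""

    SIZE = 5

    def __init__(self, seed=0):
        rng = random.Random(seed)
        moves = [(0, 1), (0, -1), (1, 0), (-1, 0)]
        rng.shuffle(moves)
        # Hidden rule: which opaque action id causes which displacement.
        self._effects = dict(enumerate(moves))
        self.pos = (2, 2)
        self.goal = (4, 4)

    def step(self, action):
        """Apply an action, clamp to the grid, return (position, solved)."""
        dr, dc = self._effects[action]
        r = min(max(self.pos[0] + dr, 0), self.SIZE - 1)
        c = min(max(self.pos[1] + dc, 0), self.SIZE - 1)
        self.pos = (r, c)
        return self.pos, self.pos == self.goal


def explore(game, actions=range(4)):
    """Experiment: try each action once and record the move it caused."""
    model = {}
    for a in actions:
        before = game.pos
        after, _ = game.step(a)
        model[a] = (after[0] - before[0], after[1] - before[1])
    return model


def plan(model, start, goal, size):
    """Plan: breadth-first search over the learned action model."""
    queue = deque([(start, [])])
    seen = {start}
    while queue:
        pos, path = queue.popleft()
        if pos == goal:
            return path
        for a, (dr, dc) in model.items():
            nxt = (min(max(pos[0] + dr, 0), size - 1),
                   min(max(pos[1] + dc, 0), size - 1))
            if nxt not in seen:
                seen.add(nxt)
                queue.append((nxt, path + [a]))
    return None


def solve(seed=0):
    """Explore first, then plan with what was learned, then act."""
    game = ToyGridGame(seed)
    model = explore(game)
    path = plan(model, game.pos, game.goal, game.SIZE)
    solved = False
    for a in path:
        _, solved = game.step(a)
    return solved, len(path)
```

Calling `solve()` runs the whole loop on one randomly shuffled rule set. The point of the sketch is only the shape of the problem: the agent starts with no idea what its actions do and has to learn that before it can plan. The benchmark's games are far less forgiving than this toy.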

For humans, the challenge is surprisingly simple. Most people can solve these games within minutes. But for AI? The results are humbling. Current agents score zero points, failing even the developer preview of just three games.

Chollet highlights the gap: “AI can do many things, but it cannot have general intelligence as long as this fundamental divide exists.”

The Human vs. Machine Gap

The benchmark excludes trivia, cultural knowledge, and linguistic tricks. Instead, it focuses on core cognitive skills like causality, object permanence, and flexible problem-solving. These are areas where humans excel intuitively but where machines still struggle.

Interestingly, there is a glimmer of hope. OpenAI researcher Sun reported that a new ChatGPT agent was able to solve the first game in the preview. Progress? Yes, but far from a breakthrough.

A $10,000 Incentive to Push AI Forward

To encourage innovation, Hugging Face is sponsoring a four-week coding sprint with a $10,000 prize pool. Participants can develop their own AI agents and submit them via a public API. The full benchmark, including around 100 games, is expected to launch in early 2026, split into public and private test sets.

The idea is simple: move AI forward not just in narrow tasks, but in general learning ability, a critical step toward artificial general intelligence (AGI).

Why This Matters

AI benchmarks are plentiful, but ARC-AGI-3 stands out because it doesn’t reward memorization or pre-trained knowledge. Instead, it forces systems to interact, adapt, and learn without prior context.

For researchers, it’s a reminder that while today’s AI models can dazzle us with fluent text, stunning images, or code generation, they’re still lacking the kind of flexible, adaptive intelligence that even small children take for granted.

ARC-AGI-3 may not have all the answers, but it asks the right questions, and that could be the key to the next leap in AI development.

Let’s Collaborate!

I’m currently open to exciting opportunities in AI, robotics, and tech writing.

If you’d like to work together, discuss a project, or share ideas, feel free to connect with me on LinkedIn or reach out via email: michalmikulasi@gmail.com.

Also, please consider supporting me on:

https://buy.stripe.com/fZu14nffsgn85p67o43Ru00
